Shadow AI in the Enterprise: Risk, Detection, and Governance

April 8, 2026

Walter Write

What is shadow AI?

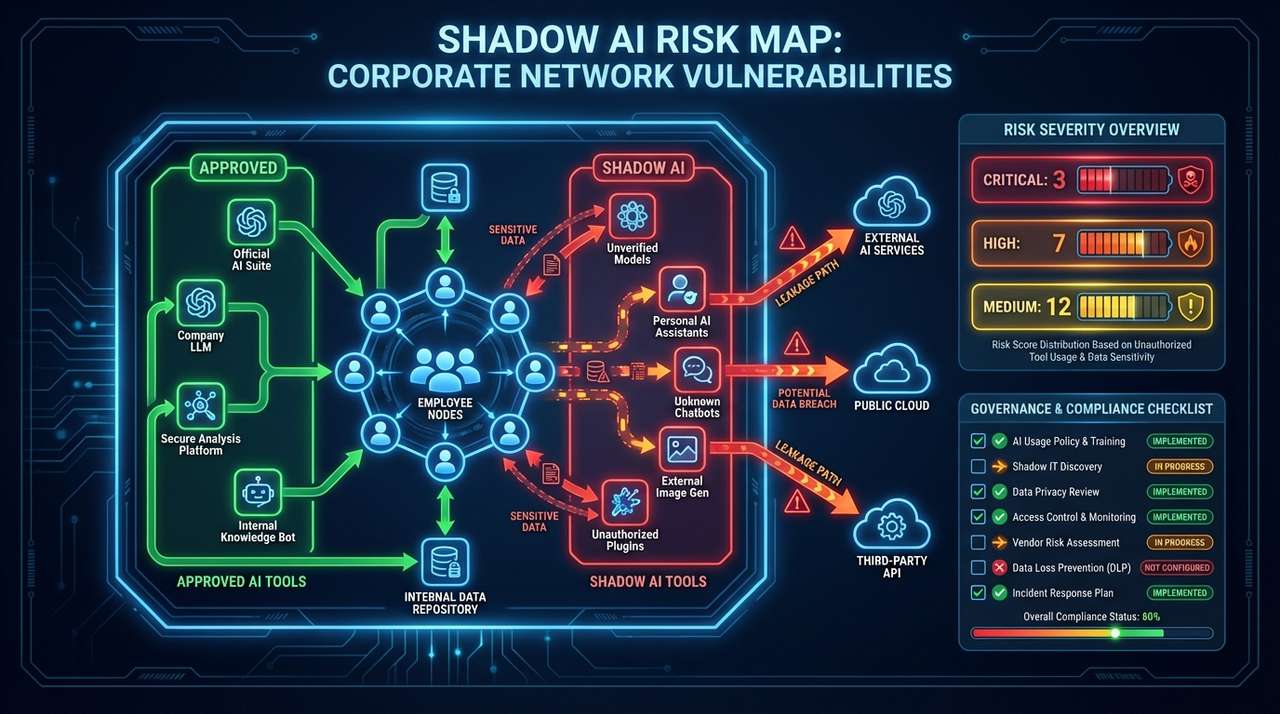

Shadow AI is the use of unapproved AI tools (personal ChatGPT accounts, Perplexity, Claude.ai, Midjourney, Jasper) for work tasks without IT's knowledge or governance. It is the 2026 version of shadow IT, and it is more dangerous because AI tools actively process and potentially store the data employees paste into them.

Why shadow AI is a bigger risk than shadow IT

- Data exfiltration: employees paste customer data, source code, financial projections, and strategic plans into public AI models.

- Compliance violations: a single prompt containing protected data can violate GDPR or HIPAA obligations or break SOC 2 controls.

- IP leakage: proprietary algorithms, product roadmaps, and competitive intelligence entered into AI tools may be used for model training.

- Uncontrolled costs: free-tier AI accounts convert to paid plans, and nobody tracks the spend centrally.

- Quality risk: AI-generated work product with no review process introduces errors into decisions and deliverables.

How widespread is shadow AI?

In the average tech company, 60-70% of employees use at least one AI tool not provided by the company. The most common shadow AI tools are personal ChatGPT accounts (used by 42% of employees), Perplexity (28%), Claude.ai (18%), and various specialized tools (15%). These shares overlap, because one employee can use more than one tool.

How to detect shadow AI

Connect to your identity provider

SSO and identity provider logs show which SaaS tools employees authenticate with. AI tools that appear in SSO but are not on the approved list are shadow AI.
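As a rough illustration of that comparison, the sketch below checks sign-in events against an approved list. The event shape (`user` and `app` keys), the `APPROVED_AI_APPS` and `KNOWN_AI_APPS` sets, and the `find_shadow_ai` function are all assumptions made for this example. Real identity provider exports use their own field names and would need mapping first.

```python
from collections import defaultdict

# Hypothetical lists for illustration; maintain your own catalog and allowlist.
APPROVED_AI_APPS = {"Bloomy"}
KNOWN_AI_APPS = {"ChatGPT", "Perplexity", "Claude.ai", "Midjourney", "Jasper", "Bloomy"}


def find_shadow_ai(sso_events):
    """Return {app: set of users} for AI apps seen in SSO events but not approved."""
    shadow = defaultdict(set)
    for event in sso_events:
        app = event["app"]
        if app in KNOWN_AI_APPS and app not in APPROVED_AI_APPS:
            shadow[app].add(event["user"])
    return dict(shadow)


# Assumed, simplified event records (not a real identity provider schema).
events = [
    {"user": "ana@example.com", "app": "ChatGPT"},
    {"user": "ben@example.com", "app": "Bloomy"},
    {"user": "ana@example.com", "app": "Perplexity"},
    {"user": "cho@example.com", "app": "ChatGPT"},
]

for app, users in sorted(find_shadow_ai(events).items()):
    print(app, sorted(users))
```

A check like this only covers tools that employees sign in to with corporate credentials, which is why the other signals in this section still matter.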

Analyze browser and network signals

Web proxy logs and browser extension data can reveal AI tool usage patterns. Abloomify aggregates these signals without reading content.
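One way to aggregate usage without reading content is to keep only the hostname of each request and discard the path and query string. The sketch below assumes proxy records arrive as `(user, url)` pairs. The `AI_DOMAINS` list is illustrative and incomplete, and the function names are made up for this example. It is not a description of how Abloomify processes these signals.

```python
from collections import Counter
from urllib.parse import urlsplit

# Illustrative domain list (an assumption); extend it for your environment.
AI_DOMAINS = {
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "claude.ai",
    "midjourney.com",
    "jasper.ai",
}


def ai_domain_for(url):
    """Return the matching AI domain for a URL, or None. Only the hostname is inspected."""
    host = (urlsplit(url).hostname or "").lower()
    for domain in AI_DOMAINS:
        # Match the domain itself or any subdomain, but not lookalike hosts.
        if host == domain or host.endswith("." + domain):
            return domain
    return None


def count_ai_requests(proxy_records):
    """Count requests per (user, AI domain); paths and query strings are never stored."""
    counts = Counter()
    for user, url in proxy_records:
        domain = ai_domain_for(url)
        if domain:
            counts[(user, domain)] += 1
    return counts


records = [
    ("ana", "https://chatgpt.com/c/123"),
    ("ana", "https://chatgpt.com/"),
    ("ben", "https://www.perplexity.ai/search?q=x"),
    ("ben", "https://intranet.example.com/wiki"),
]
print(count_ai_requests(records))
```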

Expense and procurement data

Credit card statements and expense reports often contain subscriptions to AI tools that were never approved. This is shadow AI with a direct, measurable cost.
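A simple keyword scan over exported expense rows can surface these subscriptions. In the sketch below, the row format (`merchant` and `amount` fields), the merchant strings, and the keyword-to-tool mapping are assumptions for illustration. Real card statements format merchant names inconsistently, so treat any match as a lead to review rather than a finding.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical mapping from merchant-name keywords to tools (an assumption).
AI_VENDOR_KEYWORDS = {
    "openai": "ChatGPT",
    "chatgpt": "ChatGPT",
    "perplexity": "Perplexity",
    "anthropic": "Claude.ai",
    "midjourney": "Midjourney",
    "jasper": "Jasper",
}


def shadow_ai_spend(expense_rows, approved_tools=frozenset()):
    """Sum spend per unapproved AI tool, matching on merchant-name keywords."""
    totals = defaultdict(Decimal)
    for row in expense_rows:
        merchant = row["merchant"].lower()
        for keyword, tool in AI_VENDOR_KEYWORDS.items():
            if keyword in merchant and tool not in approved_tools:
                totals[tool] += Decimal(row["amount"])
                break  # count each row once, even if several keywords match
    return dict(totals)


# Assumed export format; amounts are strings to avoid float rounding.
rows = [
    {"merchant": "OPENAI *CHATGPT SUBSCR", "amount": "20.00"},
    {"merchant": "Midjourney Inc", "amount": "30.00"},
    {"merchant": "OPENAI *CHATGPT SUBSCR", "amount": "20.00"},
    {"merchant": "Office Depot", "amount": "54.10"},
]
print(shadow_ai_spend(rows))
```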

Building an AI governance framework

The goal is not to block AI usage. It is to channel it through governed systems.

- Step 1: Audit current AI usage (shadow and approved). Know the full landscape.

- Step 2: Define approved AI tools and usage policies by role. For example, Engineering gets code models and Legal gets restricted access.

- Step 3: Deploy an AI gateway (like Abloomify) that provides approved AI access with an audit trail, data loss prevention (DLP), and model controls.

- Step 4: Migrate shadow AI users to the approved platform. Make it easier to use the approved tool than the shadow one.

- Step 5: Monitor continuously. New shadow AI tools appear every month.
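To make Steps 2 and 3 concrete, here is a minimal sketch of what a gateway check could look like: a role-based model policy, a basic DLP redaction pass, and an audit record. Everything in it is hypothetical, including the role names, model names, regex patterns, and the `gateway_check` function. It does not describe Abloomify's implementation, and production DLP needs far more than two regular expressions.

```python
import re

# Hypothetical role policy (Step 2): which model types each role may use.
ROLE_POLICY = {
    "engineering": {"code-model", "general-chat"},
    "legal": {"general-chat"},
}

# Deliberately simple patterns for illustration only.
DLP_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt):
    """Replace matches of each DLP pattern and report which patterns fired."""
    findings = []
    for label, pattern in DLP_PATTERNS.items():
        prompt, hits = pattern.subn(f"[{label.upper()} REDACTED]", prompt)
        if hits:
            findings.append(label)
    return prompt, findings


def gateway_check(role, model, prompt):
    """Apply the role policy, redact the prompt if allowed, and build an audit record."""
    allowed = model in ROLE_POLICY.get(role, set())
    safe_prompt, findings = redact(prompt) if allowed else ("", [])
    # The audit record stores metadata only, not the prompt text.
    audit_record = {
        "role": role,
        "model": model,
        "allowed": allowed,
        "dlp_findings": findings,
    }
    return safe_prompt, audit_record


print(gateway_check("engineering", "code-model",
                    "Email jo@example.com about SSN 123-45-6789"))
print(gateway_check("legal", "code-model", "Summarize this contract"))
```

Unknown roles get no access in this sketch, so a role missing from the policy fails closed.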

FAQ

Can we just block AI tools?

You can try. It will fail. Employees will use personal devices or find workarounds. The better approach is to provide a governed alternative that is just as good, so they have no reason to go around it.

How does Abloomify help with shadow AI?

Abloomify detects shadow AI usage from SSO, expense, and activity signals. It provides an AI gateway (Bloomy) with a full audit trail, model access controls, and DLP so employees can use AI safely within governance guardrails. The gateway sits on the same platform as workforce intelligence and native performance management (Goals & OKRs, reviews, feedback, surveys), so AI adoption, people programs, and operational metrics stay aligned. See AI governance software.

What about employees using AI on personal devices?

Personal device usage is harder to detect. The governance approach is to make the approved AI platform so useful that employees prefer it. Bloomy, Abloomify’s governed AI workforce agent, answers questions about company data in real time from connected systems. No personal AI tool can replicate that, which gives employees a natural reason to adopt it.

Bloomy: governed AI with live org context

See how Bloomy combines audit-ready access, model controls, and instant answers grounded in your stack.

Walter Write

Staff Writer

Tech industry analyst and content strategist specializing in AI, productivity management, and workplace innovation. Passionate about helping organizations leverage technology for better team performance.