Performance Management Tools: 7 That Actually Move Outcomes (2026)

May 6, 2026

Amir Tavafi

Most performance management tools in 2026 are doing something strange. They have gotten very good at digitizing the review form, scheduling the check-in, and visualizing the OKR. They have not gotten meaningfully better at telling a leader whether the team is actually doing better work. That is a real gap, and it explains why most of the performance management software shortlists you'll find online look almost identical.

Key Takeaways

Q: What separates a useful performance management tool from a form-based one?

A: A useful tool pulls signals from work tools you already use (GitHub, Jira, Calendar, Slack, Workday) and turns them into observable outcomes. A form-based tool digitizes the review process and asks people to re-enter what already happened. Both have a place, but only the first one tells you whether the team is improving.

Q: Which tools are best for engineering-heavy companies?

A: For pure engineering, Jellyfish is the deepest. For cross-functional companies that also need capacity planning, AI tool ROI tracking, and the ability to ask "who is overloaded right now?", Abloomify covers more surface. The two are not direct substitutes. Jellyfish is engineering-only; Abloomify spans engineering plus operations, IT, and HR signals.

Q: Are AI-powered review drafts actually saving time?

A: Yes, when the AI works from real data instead of free text. At one customer, a 50-person SaaS company, the COO said: "what I did manually this week in a spreadsheet is exactly what I think Abloomify should be doing automatically." Pulling six weeks of PR review activity, decision closure, and meeting load into a draft review cuts manager time on a single review from 45 minutes to under 5 minutes.

Q: Do I need to replace my HRIS to use a performance management tool?

A: No. Modern tools integrate with Workday, BambooHR, ADP, Rippling, UKG Pro, and the rest. If a vendor wants you to migrate your HRIS to use their performance product, walk away.

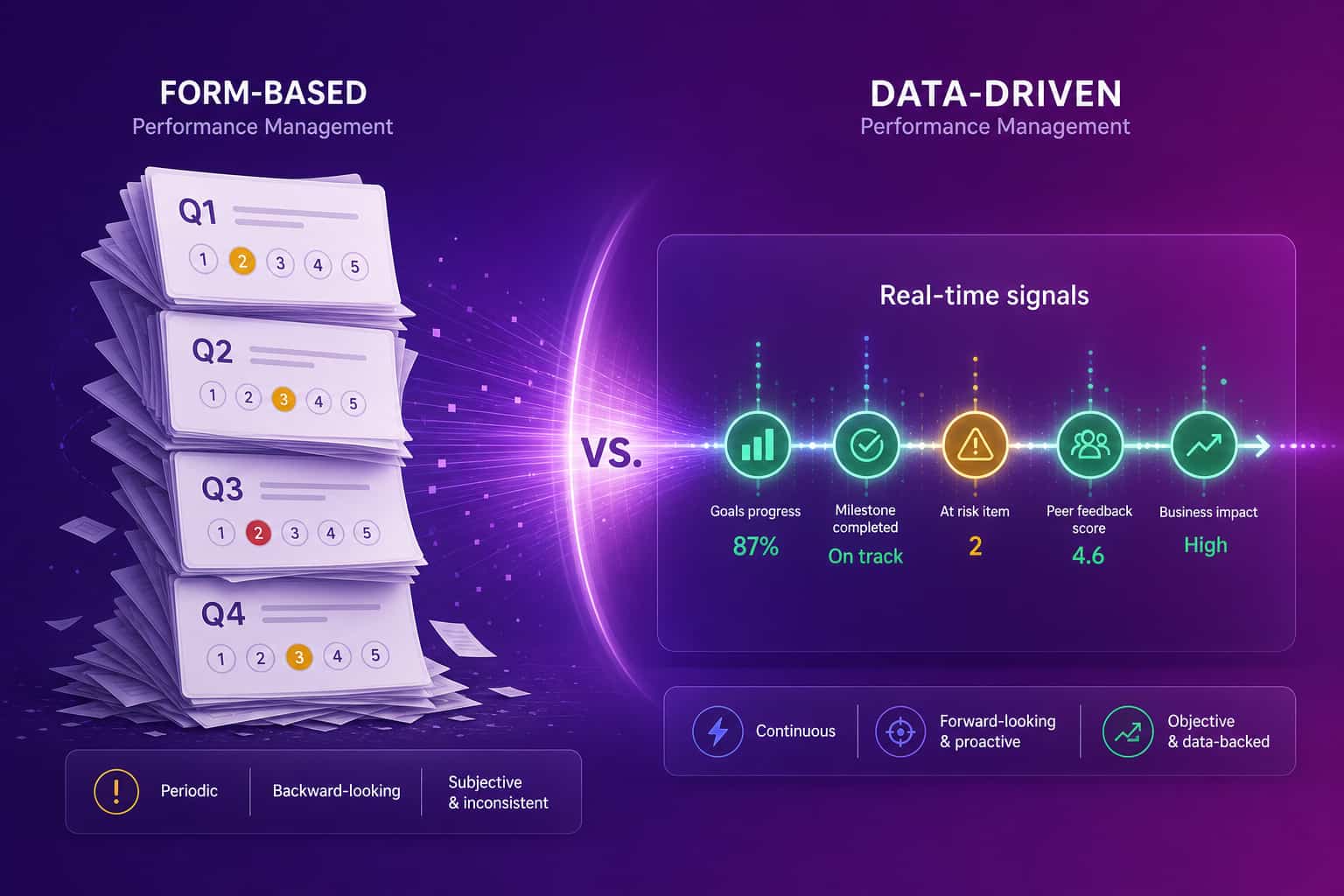

What "performance management tools" actually do in 2026

Performance management tools are software that help leaders set goals, track progress, run reviews, and make compensation and growth decisions about the people on their teams. In 2026, the category has bifurcated into two clearly different products that share the same name. The first is form-based: it digitizes the review cycle, automates the OKR check-in, and centralizes self-evaluations. The second is data-driven: it pulls signals from work itself, from PR cycle times to decision closure rates to meeting cost, and surfaces patterns leaders cannot see from a calendar of forms. Most "best of" lists conflate the two, which is why they read like the same article rewritten with different names. The honest split is that form-based tools are workflow software, and data-driven tools are observability software. You probably need a thin layer of one and a real layer of the other.

The form-based camp leads with names you'll recognize: Lattice, 15Five, Culture Amp, Leapsome, BambooHR. They have polished review templates, decent goal management, and good engagement-survey machinery. The data-driven camp is smaller and newer: Jellyfish for engineering, Abloomify for cross-functional, and a handful of niche tools for specific signals (meeting cost calculators, focus-time analyzers). The split matters because the buying mistake people keep making is treating the form-based tier as if it were the observability tier. It is not. A weekly check-in form is not a measurement system; it is a prompt for one.

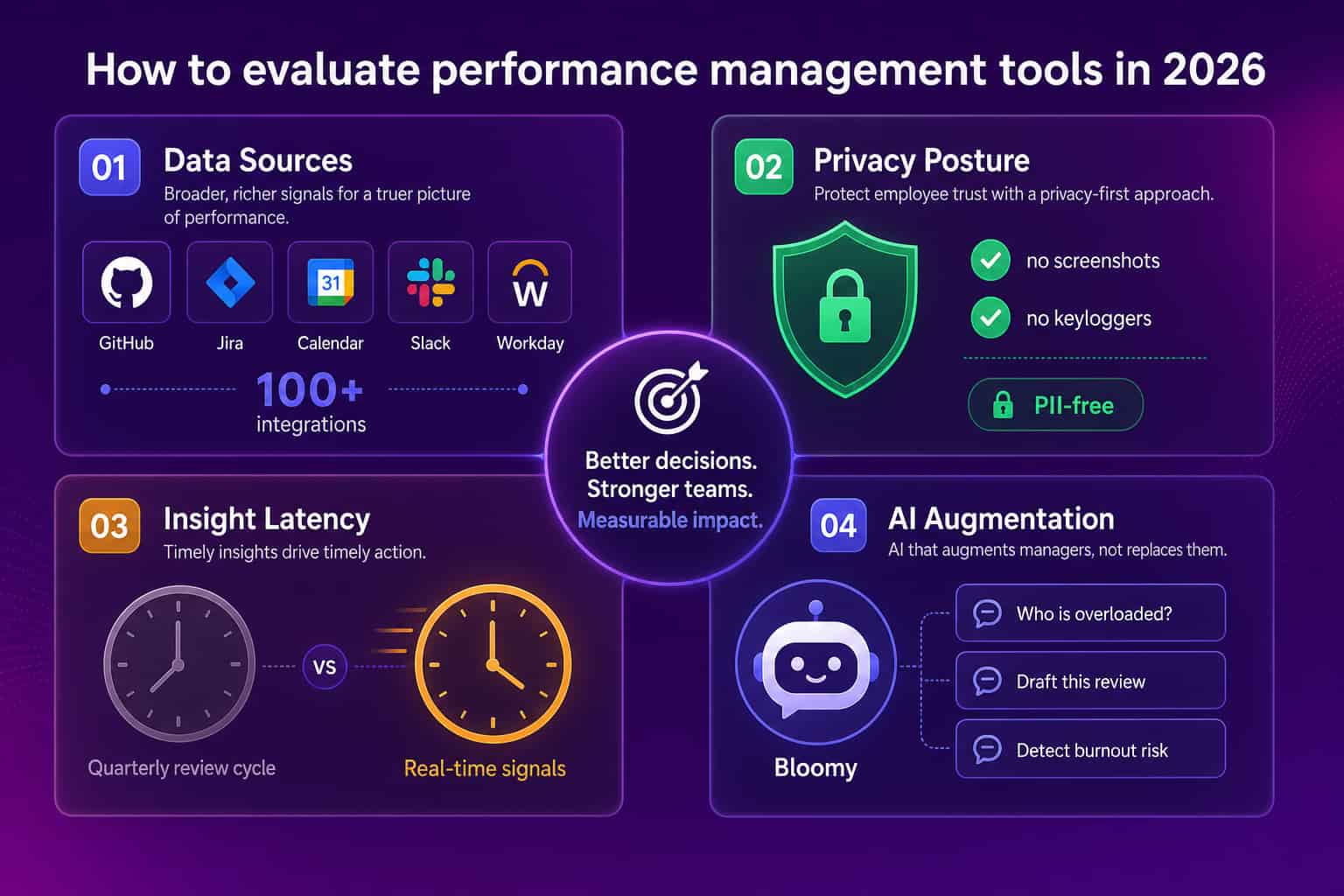

The criteria most reviews don't track

Reviews of performance management tools tend to score on the wrong axes. They count features (templates, integrations, themes), benchmark UX, and rate the engagement survey library. None of those tell you whether the tool will improve the way your leaders make decisions. The criteria that actually matter come down to four questions, each of which most form-based vendors prefer not to answer directly.

The first criterion is data sources. Can the tool pull signals from GitHub, Jira, Calendar, Slack, your CRM, your AI coding assistants, and your HRIS, all without requiring managers to re-enter the same information twice? If the answer is "we have an HRIS integration and a Slack notification," that is not a data source layer; that is a thin pipe.
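To make "pulling a signal" concrete, here is a minimal sketch of one such signal, PR cycle time, computed from pull-request timestamps. It is an illustration only, not any vendor's method: the records are made up, the function names are hypothetical, and "cycle time" is assumed here to mean time from PR opened to PR merged (teams define it differently, for example from first commit or through to deploy).

```python
from datetime import datetime
from statistics import median

# Hypothetical pull-request records: (opened_at, merged_at) as ISO 8601 strings.
# In practice these would come from your Git host's API or an export.
PULL_REQUESTS = [
    ("2026-04-01T09:00:00", "2026-04-01T15:00:00"),
    ("2026-04-02T10:00:00", "2026-04-03T10:00:00"),
    ("2026-04-03T08:00:00", "2026-04-03T20:00:00"),
]


def cycle_time_hours(opened_at: str, merged_at: str) -> float:
    """Hours between a PR being opened and being merged (assumed definition)."""
    opened = datetime.fromisoformat(opened_at)
    merged = datetime.fromisoformat(merged_at)
    return (merged - opened).total_seconds() / 3600


def median_cycle_time_hours(prs) -> float:
    """Median cycle time; the median is less skewed by one long-running PR."""
    return median(cycle_time_hours(opened, merged) for opened, merged in prs)


if __name__ == "__main__":
    print(f"Median PR cycle time: {median_cycle_time_hours(PULL_REQUESTS):.1f} h")
```

The point of the sketch is that the inputs already exist in the work tool. Nobody has to re-enter them into a form, which is the test this criterion applies.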

The second is privacy posture. Screenshots, keystroke logging, and screen recording cost you employee trust faster than they recover any actual insight. The 2026 surveys are unambiguous: roughly 1 in 6 workers say they would quit over surveillance, and the Personnel Psychology meta-analysis found no evidence that monitoring improves performance. A privacy-first tool is not a compliance feature; it is a hiring feature.

The third is insight latency. Quarterly review cycles are not measurement. They are reporting on a measurement that should have happened months ago. The latency a leader needs is closer to 24 hours, and on a few questions ("who is overloaded?", "is review reliability slipping?") it should be closer to real time.

The fourth is AI augmentation done right. The good pattern: AI drafts a review from six weeks of work data, the manager edits for 5 minutes. The bad pattern: AI generates a review out of thin air and asks the manager to rubber-stamp it. Most vendors are now in the second camp because it is easier to ship.

Form-based vs data-driven: a hard look

Both approaches are legitimate. Both have strengths. Most companies need to combine them. Here is the honest comparison.

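Form-based (Lattice, 15Five, Culture Amp, Leapsome): workflow software. It digitizes the review cycle, automates the OKR check-in, and centralizes self-evaluations, with polished review templates and engagement surveys.

Data-driven (Abloomify, Jellyfish, niche tools): observability software. It pulls signals from work itself, such as PR cycle times, decision closure rates, and meeting cost, and surfaces patterns that a calendar of forms does not show.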

The companies I've seen get the best results combine the two. They use a form-based tool to run the formal cycle and a data-driven tool to make sure the form-based tool is asking the right questions. If you only have budget for one, pick the layer that matches your bigger gap. If managers are flying blind between cycles, you need data-driven. If you have data but no shared review process, you need form-based.

The 7 tools, ranked by what they actually measure

This list is opinionated. It is ranked by how much real measurement each tool does (data-driven first), not by market share. If you are choosing for a specific use case, the order changes.

1. Abloomify

Best for: cross-functional companies (50–3,500 people) that want engineering velocity, capacity planning, AI tool ROI, and people analytics in one place, without surveillance.

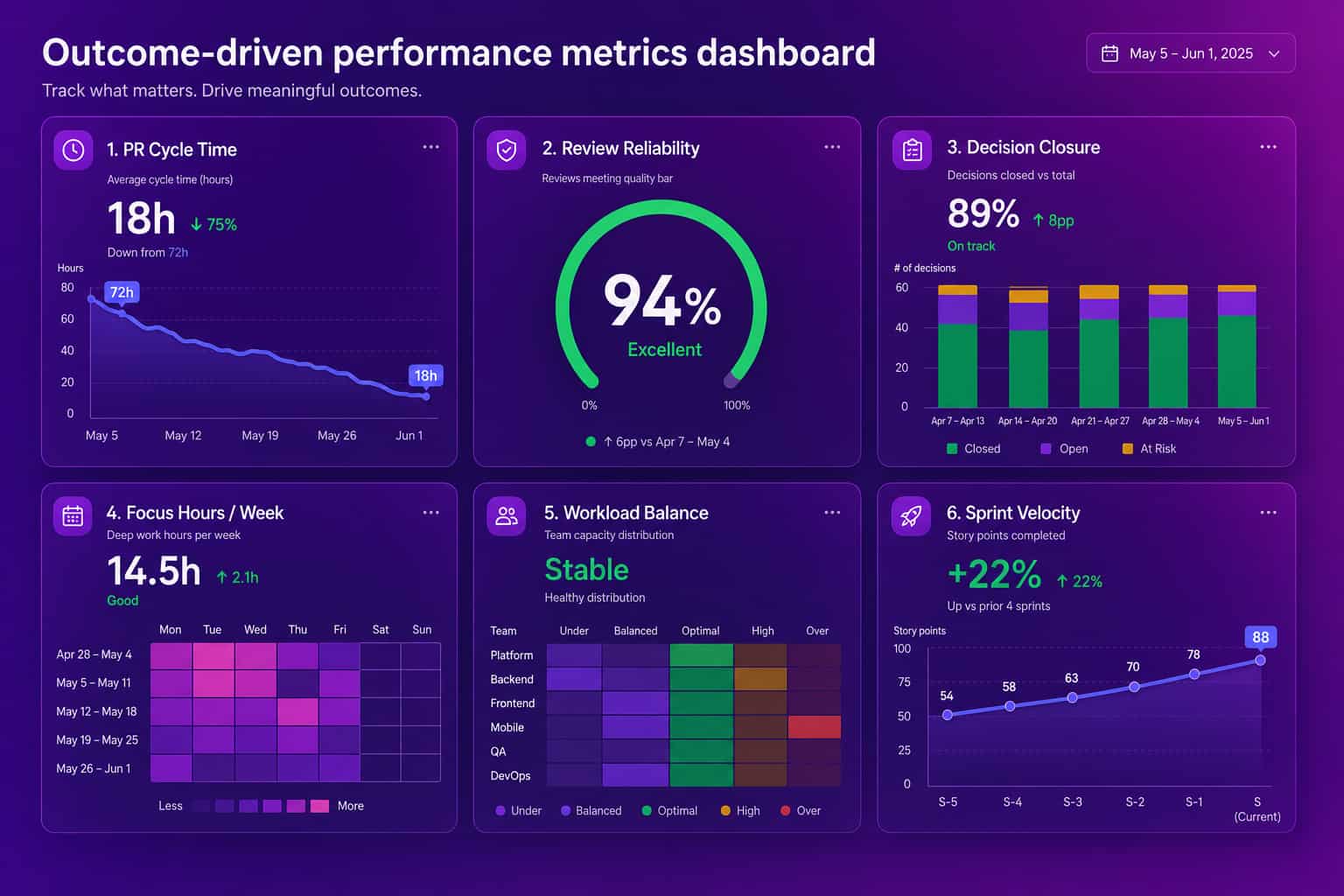

What it measures: PR cycle time, review reliability, decision closure rate, meeting cost and quality, focus hours, AI tool adoption (Cursor, Copilot, Claude Code), capacity utilization. Pulls from 100+ integrations including Jira, GitHub, Google Workspace, Microsoft 365, Slack, Workday, BambooHR, ADP, Rippling, UKG Pro, Gong. Privacy-first: no screenshots, no keyloggers, no content capture. SOC 2 Type 2 certified, with private cloud / BYOC available.

What it does not do: it is not a polished review-form workflow product. If you need beautiful self-evaluation templates and a dozen pre-built engagement surveys, layer Abloomify under a form-based tool. What it does include is Bloomy, the AI Chief of Staff, which drafts performance reviews from real data for a manager to edit in about 5 minutes.

2. Jellyfish

Best for: engineering organizations of 100+ developers that want deep delivery analytics.

What it measures: PR cycle, sprint velocity, code review patterns, deployment frequency, allocation by initiative. Excellent depth on engineering-only signals.

What it does not do: anything outside engineering. If you also need ops, IT, sales, or HR signals, you will need a second tool. For pure engineering teams, this is the reference point.

3. Lattice

Best for: mid-market companies (200–2,000 people) that want a polished review and OKR platform with strong engagement features.

What it measures: review cycles, OKR progress, 1:1 outcomes, engagement survey scores. Form-based throughout.

What it does not do: pull signals from work tools beyond a thin Slack and HRIS integration. If your goal is observability rather than process, see the Abloomify vs Lattice comparison.

4. 15Five

Best for: companies prioritizing weekly check-ins and continuous feedback workflows.

What it measures: check-in completion, OKR progress, manager 1:1 cadence, recognition. AI-powered review drafts (form-text-based).

What it does not do: measure the work itself. The 15Five model is "ask people, then summarize the answers." See the Abloomify vs 15Five comparison for where each fits.

5. Culture Amp

Best for: HR-led organizations focused on engagement surveys with a performance review layer added.

What it measures: engagement, eNPS, manager effectiveness, performance review cycles. Excellent benchmarking against industry data.

What it does not do: measure performance signals outside surveys. The risk: survey fatigue can produce stale signals that look stable while real outcomes drift.

6. Leapsome

Best for: European-headquartered companies that want a tightly integrated reviews + learning + compensation + engagement bundle.

What it measures: similar to Lattice. Slightly better on learning paths and compensation cycles. Form-based throughout.

What it does not do: anything data-driven. Same fundamental category as Lattice and 15Five.

7. BambooHR (with Performance add-on)

Best for: small and mid-sized businesses (under 200 people) that want a single HRIS plus light performance product, no separate vendor.

What it measures: review cycles, goals, and basic 1:1 documentation, all inside the HRIS.

What it does not do: anything substantial outside the HRIS. Good for teams of under 200 people that do not yet need a dedicated performance product. Above 500 people the depth runs out fast.

How to evaluate before you buy

A 60-minute evaluation that will save you 6 months of regret. Run it before any demo.

- List your work tools. GitHub, Jira, Slack, Calendar, CRM, HRIS, AI assistants, anything else with daily activity. Count them.

- Ask each vendor: "How many of these do you natively integrate with, and what specific signals do you pull from each?" Anything below 70% coverage means the tool is form-based regardless of how it markets itself.

- Ask: "What does my CTO see at 9 AM Monday without logging into another dashboard?" A real answer involves three or four metrics named specifically. A vague answer means there is no real-time layer.

- Demand a privacy posture statement in writing. "We do not capture screenshots, keystrokes, or content" or "we do." Anything in between is sales fog.

- Run a single review draft side by side. Have the vendor's AI draft a review for one of your engineers using only the data they pull. If the draft reads like a generic form summary, you have your answer.
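The coverage check in the second step can be sketched as a small calculation. This is a rough illustration under stated assumptions: the tool inventory uses the generic categories from the first step, the vendor integration sets are invented, and the function names are hypothetical. Only the 70% threshold comes from the checklist.

```python
# Hypothetical inventory of work tools, using the categories from the checklist.
WORK_TOOLS = {"GitHub", "Jira", "Slack", "Calendar", "CRM", "HRIS", "AI assistant"}

# Threshold taken from the checklist: anything below 70% coverage fails.
COVERAGE_THRESHOLD = 0.70


def integration_coverage(work_tools: set[str], vendor_integrations: set[str]) -> float:
    """Fraction of your work tools that the vendor natively integrates with."""
    if not work_tools:
        raise ValueError("list at least one work tool before computing coverage")
    return len(work_tools & vendor_integrations) / len(work_tools)


def meets_threshold(work_tools: set[str], vendor_integrations: set[str]) -> bool:
    """True when coverage is at or above the 70% bar."""
    return integration_coverage(work_tools, vendor_integrations) >= COVERAGE_THRESHOLD


if __name__ == "__main__":
    # Invented vendor answers, for illustration only.
    vendor_a = {"GitHub", "Jira", "Slack", "Calendar", "HRIS"}
    vendor_b = {"Slack", "HRIS"}
    for name, integrations in (("Vendor A", vendor_a), ("Vendor B", vendor_b)):
        coverage = integration_coverage(WORK_TOOLS, integrations)
        verdict = "passes" if meets_threshold(WORK_TOOLS, integrations) else "fails"
        print(f"{name}: {coverage:.0%} coverage, {verdict}")
```

A count like this only answers the first half of the question. The second half, which specific signals the vendor pulls from each integration, still has to be read from the vendor's written answer.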

If your goal is to actually move outcomes (sprint velocity, review reliability, retention, cycle time), you need a tool that measures outcomes. If your goal is to run a clean compensation cycle with documented justifications, you need a tool that helps you write them. Most companies need both. Almost no company needs three.

FAQ

What are performance management tools?

Performance management tools are software that help leaders set goals, track progress, run reviews, and make decisions about people. The 2026 generation splits into form-based platforms that digitize the review cycle (15Five, Lattice, Culture Amp) and data-driven platforms that pull signals from work itself (Abloomify, Jellyfish). Most companies need a thin layer of the first plus a real layer of the second.

What is the difference between performance management software and performance management tools?

In practice, none. The terms get used interchangeably. "Software" tends to imply a single product (Lattice, BambooHR), while "tools" sometimes refers to a stack (a review platform plus an OKR tracker plus an engagement survey). For buyer purposes, treat them as the same category.

Do AI-powered performance management tools actually work?

Some do. The pattern that works: AI summarizes work signals (PRs, meetings, decisions) for managers to review and edit. The pattern that does not: AI auto-generates ratings or reviews from scratch. Bloomy, Abloomify's AI Chief of Staff, sits in the first camp. It drafts a review using six weeks of actual work data; a manager edits in 5 minutes instead of 45.

How do I evaluate a performance management tool?

Four criteria, in order: data sources (can it pull from your work tools), privacy posture (no screenshots or keyloggers), insight latency (quarterly cycles are too slow), and AI augmentation done right (drafts from real data, not generated from nothing).

What are the 5 C's of performance management?

The 5 C's are clarity, communication, calibration, coaching, and consequence. Clarity sets goals; communication keeps them visible; calibration aligns ratings across managers; coaching closes gaps; consequence ties performance to compensation, growth, and exit. Tools can support every C, but only the data-driven category shows whether any of them are working.

Should I replace my HRIS to use a performance management tool?

No. Any modern tool integrates with Workday, BambooHR, ADP, Rippling, UKG Pro, and the rest. If a vendor pushes you to migrate your HRIS to use their performance product, walk away.

Amir Tavafi

Co-Founder & CEO

Product leader and innovator with over 15 years of experience in the tech sector, grounded in AI and robotics. Previously led product development in fraud detection and AI solutions at Nasdaq Verafin.