Best AI Adoption Measurement Tools for Product Teams (2026)

May 2, 2026

Walter Write

Key Takeaways

Q: What shows AI is helping product teams?

A: Faster discovery cycles, higher synthesis reuse, clearer decision records (ADRs), more experiments with captured learnings, and measurable customer impact.

Q: How do we avoid vanity metrics?

A: Tie assistant usage to outcomes like onboarding/activation and documented decisions, not just artifact counts.

Q: What early targets are reasonable?

A: −25% discovery cycle time and +20% experiment velocity with consistent learnings capture.

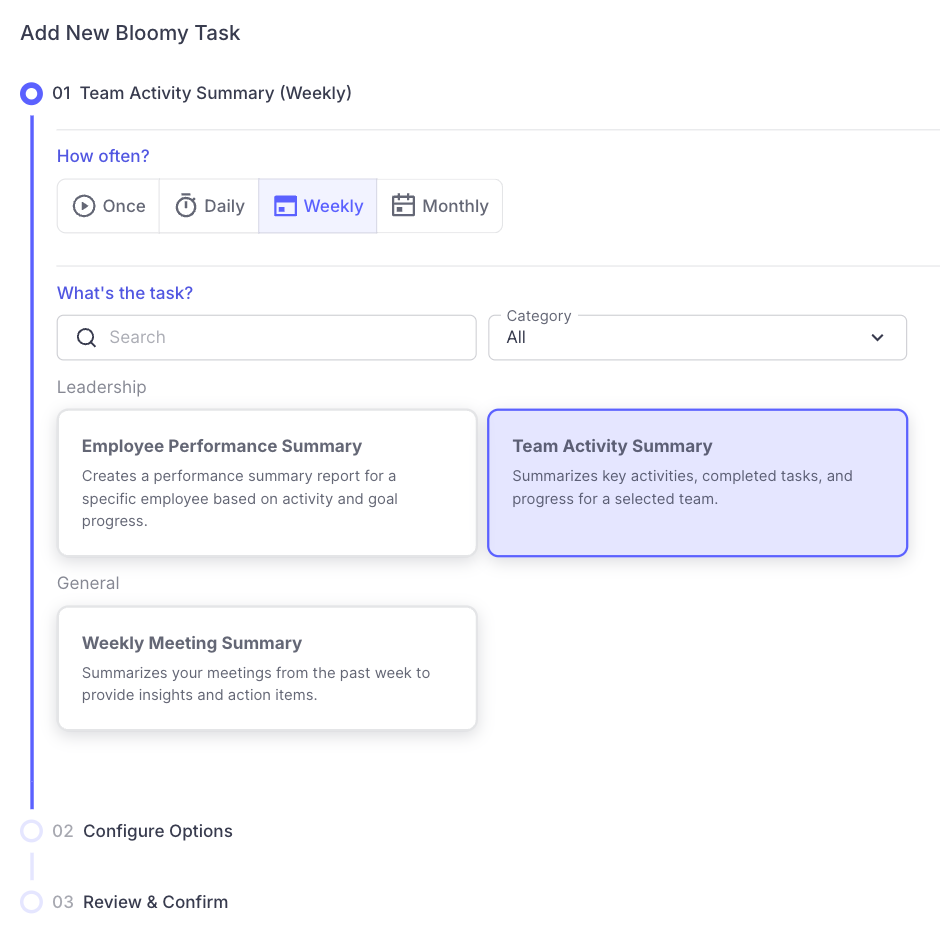

Example: discovery artifacts, decision records, and experiment velocity

Which signals should product teams track?

- Discovery: interviews synthesized, themes, opportunity trees updated

- Decisions: ADRs, alternatives compared, evidence quality

- Experiments: cycle time, sample coverage, learning capture

- Outcomes: onboarding time, activation, retention lift from AI features

How do tools compare at a glance?

| Capability | Abloomify Product Analytics | Research Platforms | Experiment Platforms |
|---|---|---|---|
| Adoption coverage | Team/initiative | Synthesis usage | Tests/velocity |
| Outcome correlation | Effort → PM outcomes | Partial | Partial |
| Governance | Policies/notes | N/A | N/A |

What targets are reasonable?

- −25% discovery cycle time; +30% synthesis reuse

- +20% experiment velocity with documented learnings
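To make targets like these checkable, the sketch below compares a current reading against a baseline and reports whether the fractional change reaches the target. It is a minimal illustration, not a description of any tool's method: the `MetricReading` type, the metric names, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MetricReading:
    name: str
    baseline: float
    current: float
    target_change: float  # fractional target, e.g. -0.25 means "25% lower"


def relative_change(baseline: float, current: float) -> float:
    """Fractional change of current versus baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline


def meets_target(reading: MetricReading) -> bool:
    """Negative targets are reductions to reach; positive targets are increases."""
    change = relative_change(reading.baseline, reading.current)
    if reading.target_change < 0:
        return change <= reading.target_change
    return change >= reading.target_change


# Hypothetical readings for one initiative (illustrative values only).
readings = [
    MetricReading("discovery_cycle_days", 20.0, 14.0, -0.25),
    MetricReading("synthesis_reuse_rate", 0.30, 0.36, 0.30),
    MetricReading("experiments_per_month", 4.0, 5.0, 0.20),
]

for r in readings:
    change = relative_change(r.baseline, r.current)
    print(f"{r.name}: {change:+.0%} (target {r.target_change:+.0%}) met={meets_target(r)}")
```

A check like this only works if the baseline is captured before assistants are introduced, so the comparison reflects the change rather than normal variation between initiatives.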

Abloomify correlates discovery/delivery effort with outcomes, so product leaders can guide AI investments with evidence. Explore solutions/continuous-improvement.

How should we choose product AI measurement tools?

Unify research platforms, issue trackers, experiment systems, and analytics so you can track discovery throughput, decision quality, and outcomes.

- Discovery artifacts: interviews synthesized, themes created, opportunity tree updates

- Decision records: ADR completeness, alternatives compared, evidence quality

- Experiment velocity: cycle time, sample coverage, documented learnings

- Customer outcomes: activation, retention, and onboarding improvements from AI features

- Lightweight setup and exports to your analytics stack

What is our 8‑week rollout plan?

Week 1: Baseline discovery throughput and ADR usage for one initiative.

Week 2: Ship prompt packs for synthesis and decision templates; tag assisted artifacts.

Week 3: Launch one experiment with clear measures; track cycle time.

Week 4: Snapshot results; coach on evidence quality and alternatives.

Weeks 5–6: Add a second initiative; share learnings in a weekly note.

Week 7: Connect to product analytics; attribute onboarding/activation lift.

Week 8: Executive checkpoint; standardize reporting across initiatives.

What pitfalls should we avoid, and how do we fix them?

- Counting documents → measure decisions and outcomes, not just artifacts.

- Stale opportunity trees → schedule monthly updates tied to discovery throughput.

- Lost learnings → require a “one‑slide” summary per experiment.
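One way to move from counting documents to measuring decisions is to report how many AI-assisted artifacts are actually cited by a decision record. The sketch below assumes artifacts carry an `assisted` tag and a list of linked ADR ids; both fields, and the `Artifact` type itself, are hypothetical and would need to map onto whatever your research platform exports.

```python
from dataclasses import dataclass, field


@dataclass
class Artifact:
    artifact_id: str
    assisted: bool  # hypothetical tag: artifact was produced with an AI assistant
    linked_decisions: list[str] = field(default_factory=list)  # ids of ADRs citing it


def decision_linked_share(artifacts: list[Artifact]) -> float:
    """Share of assisted artifacts cited by at least one decision record."""
    assisted = [a for a in artifacts if a.assisted]
    if not assisted:
        return 0.0
    linked = sum(1 for a in assisted if a.linked_decisions)
    return linked / len(assisted)


# Illustrative data only.
artifacts = [
    Artifact("synth-01", assisted=True, linked_decisions=["adr-7"]),
    Artifact("synth-02", assisted=True),
    Artifact("synth-03", assisted=True, linked_decisions=["adr-7", "adr-9"]),
    Artifact("notes-04", assisted=False),
]

assisted_count = sum(1 for a in artifacts if a.assisted)
share = decision_linked_share(artifacts)
print(f"assisted artifacts: {assisted_count}, linked to a decision: {share:.0%}")
```

Reporting the raw count next to the linked share makes the vanity metric visible: a rising count with a flat or falling share suggests output is growing faster than its use in decisions.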

FAQ

Q: How do we avoid building AI features that don’t stick?

A: Tie discovery to activation/retention metrics and run small experiments before full builds.

Q: Can we compare initiatives fairly?

A: Use normalized measures of discovery throughput and ADR quality, with context on complexity.
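As a rough sketch of what "normalized" could mean here, the example below divides validated insights by discovery effort (team-weeks) and scores ADR quality as the fraction of ADRs that record alternatives and evidence. These definitions, the `InitiativeStats` fields, and the sample numbers are assumptions for illustration. Complexity is carried along as context rather than folded into the score.

```python
from dataclasses import dataclass


@dataclass
class InitiativeStats:
    name: str
    validated_insights: int
    team_weeks: float   # people multiplied by weeks spent on discovery
    adrs_total: int
    adrs_complete: int  # ADRs that record alternatives and evidence
    complexity: str     # hypothetical label shown for context: "low", "medium", "high"


def insights_per_team_week(s: InitiativeStats) -> float:
    if s.team_weeks <= 0:
        raise ValueError("team_weeks must be positive")
    return s.validated_insights / s.team_weeks


def adr_quality(s: InitiativeStats) -> float:
    return s.adrs_complete / s.adrs_total if s.adrs_total else 0.0


def comparison_rows(stats: list[InitiativeStats]) -> list[dict]:
    """One row per initiative, sorted by normalized throughput (highest first)."""
    rows = [
        {
            "name": s.name,
            "insights_per_team_week": insights_per_team_week(s),
            "adr_quality": adr_quality(s),
            "complexity": s.complexity,
        }
        for s in stats
    ]
    return sorted(rows, key=lambda r: r["insights_per_team_week"], reverse=True)


# Illustrative data only.
rows = comparison_rows([
    InitiativeStats("billing", 30, 20.0, 4, 2, "high"),
    InitiativeStats("onboarding", 24, 12.0, 5, 4, "medium"),
])

for row in rows:
    print(row)
```

Keeping complexity as a label instead of a weight avoids baking a subjective multiplier into the number; reviewers can read the normalized figures and the context side by side.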

See a discovery‑to‑outcomes dashboard at request-demo.

What does “good” look like by initiative?

Discovery heavy

- Interviews synthesized/week; opportunity tree updates; reuse of insights

- Decision records with alternatives and evidence

Experiment heavy

- Cycle time per test; sample coverage; documented learnings reused

- Activation or onboarding lift tied to experiments

Rollout and adoption

- Feature adoption and retention; support themes addressed

- Quality and clarity of product update notes

What operating cadence keeps momentum?

- Weekly: discovery throughput and ADR snapshot per initiative.

- Bi‑weekly: experiment review; capture one‑slide learnings.

- Monthly: decision quality review; compare alternatives and evidence.

- Quarterly: outcomes review for activation and retention tied to AI features.

What does our measurement glossary include?

- Opportunity tree: structured view of problems and bets.

- ADR: architecture decision record, used here for product decisions as well, capturing context and trade‑offs.

- Discovery throughput: rate of validated insights and updates.

- Learning capture: quality of documentation from experiments.

- Activation: the point at which a user in the target segment first gets value, tracked as time to first value.

- Retention: continued usage across periods for the feature.
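Two of these terms translate directly into small calculations. The sketch below treats discovery throughput as validated insights per week within a date window, and summarizes activation as the median number of days from signup to first value. Both operational definitions, the function names, and the sample dates are assumptions for illustration; your team may define the window or the "first value" event differently.

```python
from datetime import date
from statistics import median
from typing import Optional


def discovery_throughput(insight_dates: list[date], start: date, end: date) -> float:
    """Validated insights per week within the inclusive window [start, end]."""
    days = (end - start).days + 1
    if days <= 0:
        raise ValueError("end must not be before start")
    in_window = sum(1 for d in insight_dates if start <= d <= end)
    return in_window / (days / 7)


def median_days_to_first_value(
    users: list[tuple[date, Optional[date]]]
) -> Optional[float]:
    """Median days from signup to first value, over users who reached it."""
    durations = [
        (first_value - signup).days
        for signup, first_value in users
        if first_value is not None
    ]
    return median(durations) if durations else None


# Illustrative data only: a four-week window and four users.
insights = [
    date(2026, 1, 6), date(2026, 1, 8), date(2026, 1, 14),
    date(2026, 1, 20), date(2026, 1, 27), date(2026, 2, 1),
    date(2026, 2, 3),  # falls outside the window below
]
throughput = discovery_throughput(insights, date(2026, 1, 5), date(2026, 2, 1))

users = [
    (date(2026, 1, 5), date(2026, 1, 7)),
    (date(2026, 1, 6), date(2026, 1, 10)),
    (date(2026, 1, 8), None),  # has not reached first value yet
    (date(2026, 1, 9), date(2026, 1, 18)),
]
ttfv = median_days_to_first_value(users)

print(f"insights per week: {throughput}, median days to first value: {ttfv}")
```

Note that the median here ignores users who never activate, so it should be read alongside the share of users who reached first value at all.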

What did a pilot achieve?

A product trio focused AI on onboarding. They accelerated discovery synthesis, documented ADRs with clear alternatives, and ran three small experiments. Within one quarter, time‑to‑activation improved 17 percent and synthesis reuse meant fewer duplicate interviews. The team kept decisions auditable and shared learnings broadly.

FAQ

Q: How do we keep discovery from becoming a document factory?

A: Require each artifact to open with a crisp sentence that names the entities involved, reuse existing insights, and measure decisions and outcomes, not just artifact counts.

Q: What if experiments take too long?

A: Reduce scope and sample size, keep success metrics narrow, and capture one‑slide learnings to decide quickly.

Q: How do we align stakeholders on decisions?

A: Use ADRs with alternatives, evidence, and trade‑offs; link to synthesized research and experiments.

What’s our definition‑of‑done checklist?

- □ Discovery throughput and ADR quality tracked per initiative

- □ One experiment completed with documented learnings

- □ Activation or onboarding metric linked to feature changes

- □ Monthly decision review with stakeholders

- □ Internal link to research repository and analytics dashboard

What are the next steps?

Choose one onboarding initiative and run the discovery → decision → experiment loop for eight weeks. Track activation lift and capture learnings, then decide whether to scale the approach to a second initiative.

Which data sources and integrations do we use?

- Research platforms for interviews and synthesis artifacts

- Issue trackers for delivery signals and experiment tasks

- Experiment platforms and product analytics for outcomes

- Knowledge base for reusable insights and decision archives

What targets are reasonable for pilots?

- −25% discovery cycle time, with synthesis reuse trending up

- +20% experiment velocity with one‑slide learnings captured

- Activation improved for the target segment with supporting evidence

What leadership reporting should we use?

Share a monthly one‑pager per initiative: discovery throughput, decision quality highlights (best ADRs), experiments run and what was learned, and the impact on activation or retention. Keep it readable so stakeholders can make investment calls quickly.

Prefer to see a live discovery‑to‑outcomes workflow wired to your tools? Start at request-demo.

With a clear loop and shared definitions, product, design, and engineering make faster decisions and retire ideas that don’t move activation or retention.

Walter Write

Staff Writer

Tech industry analyst and content strategist specializing in AI, productivity management, and workplace innovation. Passionate about helping organizations leverage technology for better team performance.