Best AI Adoption Measurement Tools for Data & Analytics (2026)

April 10, 2026

Walter Write

Key Takeaways

Q: How do data teams prove AI impact?

A: Track assisted query rates, time‑to‑first‑insight, reuse of certified assets, governance workflow adherence, and cost‑to‑answer.

Q: What creates trustworthy acceleration?

A: A strong semantic layer, certified metrics, cache strategies, and approval workflows with model cards.

Q: Where to aim in quarter one?

A: Aim for a 25–40% reduction in time‑to‑first‑insight and a 30% increase in reuse of trusted assets in governed domains.

Which signals should data teams track?

- Assisted query/build rates and time‑to‑first‑insight

- Reuse of certified datasets, metrics, and semantic models

- Model card completeness and approval workflow adherence

- Cost‑to‑answer (compute per insight), cache hit rates

How do tools compare at a glance?

| Capability | Abloomify Analytics | BI/Semantic Layer | Data Platform Governance |
|---|---|---|---|
| Adoption coverage | Team/domain | Asset reuse | Approvals/lineage |
| Outcome correlation | Effort → time‑to‑insight | Partial | No |
| Governance | Policy & approvals | Partial | Yes |

What targets are reasonable?

- A 25–40% reduction in time‑to‑first‑insight in governed domains

- A 30% increase in reuse of certified assets

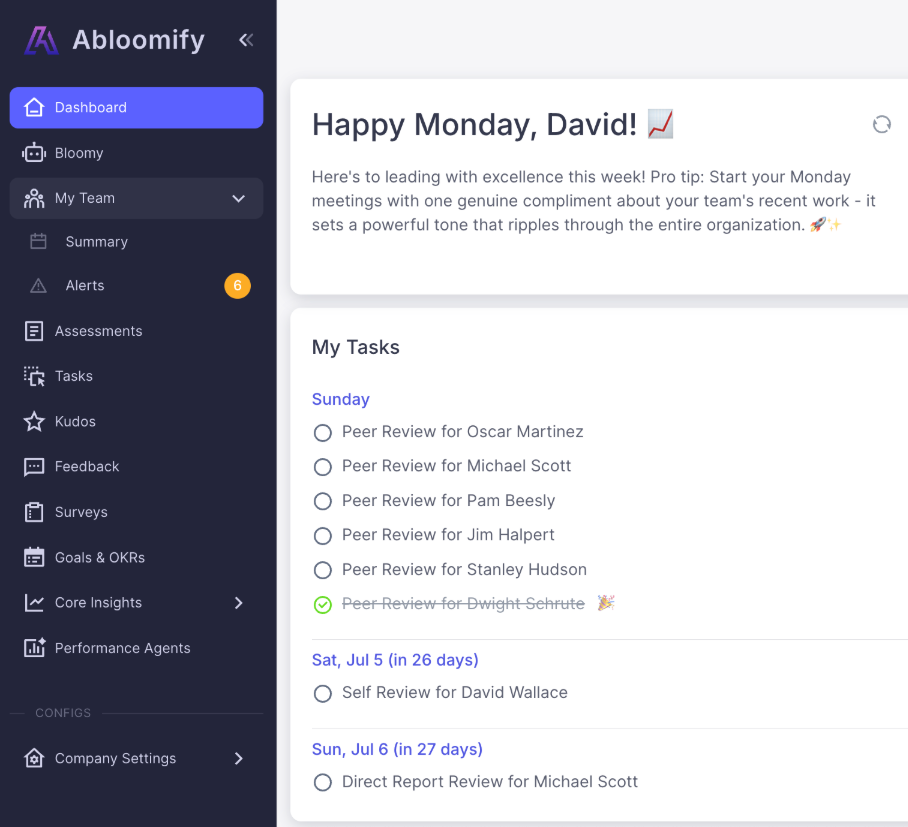

Abloomify ties assistant usage to outcomes without exposing raw data. Bloomy, our AI Chief of Staff, gives analytics leaders instant answers about AI adoption and ROI across connected tools. See the product page or request a demo.

How should we choose analytics AI measurement tools?

Connect your BI/semantic layer, data platform governance, and assistant analytics so you can measure time‑to‑insight and governed reuse credibly.

- Assisted query/build rates and time‑to‑first‑insight

- Reuse of certified assets and semantic models

- Approval workflows, model cards, and lineage

- Cost‑to‑answer with cache strategies

- Domain and role‑based access; export to lakehouse

How should we roll out and measure in 8 weeks?

Week 1: Baseline time‑to‑insight and reuse for one domain.

Week 2: Ship prompt packs and semantic model guides; tag assisted work.

Week 3: Add approvals for sensitive datasets; publish cache guidance.

Week 4: Snapshot results; highlight quick wins and anti‑patterns.

Week 5–6: Expand to a second domain; standardize certified metrics.

Week 7: Review cost‑to‑answer and optimize cache/compute.

Week 8: Executive checkpoint; scale with clear governance.

What pitfalls should we avoid, and how do we fix them?

- Ad‑hoc dashboards → require reuse of certified assets.

- Slow reviews → set lightweight approvals for low‑risk changes.

- Cost spikes → monitor compute per insight and tune caching.

FAQ

Q: How do we keep LLM answers consistent with metrics?

A: Use a semantic layer and certified metrics; route assistant prompts through that context.

Q: Can we measure time‑to‑insight automatically?

A: Yes. Track the elapsed time from request creation to the first accepted chart or saved dashboard.
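To make that measurement concrete, here is a minimal Python sketch of one way to compute it. The event records and their field names (`request_id`, `type`, `at`) are hypothetical, not the schema of any particular BI tool; a real pipeline would read these events from whatever request tracker and dashboard audit log you already have.

```python
from datetime import datetime
from statistics import median

# Hypothetical event records; the field names are assumptions for illustration.
EVENTS = [
    {"request_id": "r1", "type": "request_created", "at": datetime(2026, 4, 1, 9, 0)},
    {"request_id": "r1", "type": "chart_accepted", "at": datetime(2026, 4, 1, 13, 30)},
    {"request_id": "r1", "type": "chart_accepted", "at": datetime(2026, 4, 2, 10, 0)},
    {"request_id": "r2", "type": "request_created", "at": datetime(2026, 4, 1, 10, 0)},
    {"request_id": "r2", "type": "chart_accepted", "at": datetime(2026, 4, 1, 11, 0)},
    {"request_id": "r3", "type": "request_created", "at": datetime(2026, 4, 2, 8, 0)},
]


def time_to_first_insight(events):
    """Map request_id -> elapsed time from creation to the FIRST accepted chart."""
    created, first_accepted = {}, {}
    for e in events:
        rid = e["request_id"]
        if e["type"] == "request_created":
            created[rid] = e["at"]
        elif e["type"] == "chart_accepted":
            # Keep only the earliest acceptance per request.
            if rid not in first_accepted or e["at"] < first_accepted[rid]:
                first_accepted[rid] = e["at"]
    # Requests with no accepted chart yet are left out rather than counted as zero.
    return {
        rid: first_accepted[rid] - created[rid]
        for rid in created
        if rid in first_accepted
    }


durations = time_to_first_insight(EVENTS)
median_hours = median(d.total_seconds() / 3600 for d in durations.values())
print(f"median time-to-first-insight: {median_hours:.2f} h")
```

Reporting the median rather than the mean is a design choice here: a single request that sits open for weeks would otherwise dominate the number. Open requests (like `r3` above) are excluded, so it is worth tracking their count separately.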

Start with one governed domain and grow from there; book a demo at request-demo.

What does “good” look like by use case?

Executive dashboards

- Time from question to accepted chart; reuse of certified metrics

- Consistency of definitions across functions

Self‑service exploration

- Assisted query rates; cache hit ratios; time‑to‑first‑insight

- Approvals for sensitive joins; lineage completeness

Operational BI

- SLA adherence for refreshed datasets; incident rate for pipelines

- Lower cost‑to‑answer through caching and partitioning

What operating cadence keeps momentum?

- Weekly: domain adoption/value snapshot; highlight two assisted wins.

- Monthly: governance review for approvals and lineage; fix gaps.

- Quarterly: cost‑to‑answer audit and cache/compute tuning.

What does our measurement glossary include?

- Time‑to‑first‑insight: elapsed time from request to accepted visualization.

- Assisted query rate: share of queries authored with assistant help.

- Certified metric: governed definition that is reused across teams.

- Model card: documentation for data products or ML models.

- Lineage: upstream/downstream dependencies for assets.

- Cost‑to‑answer: compute cost incurred to deliver an answer.

- Cache hit rate: percent of queries served from cache.
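Three of these definitions reduce to simple ratios, and a short Python sketch can pin down the arithmetic. The `QueryRecord` type and its fields are hypothetical, introduced only for illustration; in particular, dividing total compute cost (including queries that never produced an answer) by the number of accepted answers is one reasonable reading of cost‑to‑answer, not the only one.

```python
from dataclasses import dataclass


@dataclass
class QueryRecord:
    """Hypothetical per-query log row; the field names are assumptions."""
    assisted: bool       # authored with assistant help
    cache_hit: bool      # served from cache
    compute_cost: float  # cost attributed to this query, in any currency unit
    answered: bool       # led to an accepted answer


def assisted_query_rate(queries):
    """Share of queries authored with assistant help."""
    return sum(q.assisted for q in queries) / len(queries) if queries else 0.0


def cache_hit_rate(queries):
    """Share of queries served from cache."""
    return sum(q.cache_hit for q in queries) / len(queries) if queries else 0.0


def cost_to_answer(queries):
    """Total compute cost divided by the number of accepted answers."""
    answers = sum(q.answered for q in queries)
    if answers == 0:
        return None  # undefined until at least one answer is accepted
    return sum(q.compute_cost for q in queries) / answers


queries = [
    QueryRecord(assisted=True, cache_hit=False, compute_cost=0.5, answered=True),
    QueryRecord(assisted=True, cache_hit=True, compute_cost=0.0, answered=True),
    QueryRecord(assisted=False, cache_hit=False, compute_cost=0.25, answered=False),
    QueryRecord(assisted=False, cache_hit=True, compute_cost=0.0, answered=True),
]

print(f"assisted query rate: {assisted_query_rate(queries):.0%}")
print(f"cache hit rate: {cache_hit_rate(queries):.0%}")
print(f"cost-to-answer: {cost_to_answer(queries):.2f}")
```

Counting the cost of unanswered queries in the numerator means abandoned explorations raise cost‑to‑answer, which is usually the behavior you want when the goal is to spot wasted compute.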

What did a pilot achieve?

An analytics team piloted governed assistants on a revenue domain. Time‑to‑first‑insight fell 35 percent while reuse of certified metrics increased by 28 percent. Lightweight approvals and model cards kept trust high, and cost‑to‑answer dropped after adopting cache strategies for common questions.

FAQ

Q: How do we prevent metric drift across teams?

A: Centralize definitions in the semantic layer, require certified metrics for exec dashboards, and track reuse.

Q: What about ad‑hoc explorations?

A: Allow them, but encourage saving accepted charts and tagging datasets to improve reuse and governance.

Q: How do we balance speed and cost?

A: Use caching for common questions, set sensible compute quotas, and monitor cost‑to‑answer per domain.

What’s our definition‑of‑done checklist?

- □ Domain baseline for time‑to‑insight and reuse captured

- □ Assisted prompts routed through the semantic layer

- □ Approvals and model cards in place for sensitive assets

- □ Cache guidance published; cost‑to‑answer monitored

- □ Quarterly governance and performance review completed

What are the next steps?

Pick one domain, route assistant prompts through the semantic layer, and baseline time‑to‑insight. After eight weeks of operating cadence and approvals, compare reuse and cost‑to‑answer before expanding.

Which data sources and integrations do we use?

- BI/semantic layer (Looker/semantic models, dbt metrics)

- Data platform governance for approvals, lineage, and catalogs

- Warehouse/lakehouse for cost and performance metrics

- Collaboration tools for sharing and reuse tracking

What targets are reasonable for pilots?

- A 25–40% reduction in time‑to‑first‑insight in the chosen domain

- A 30% increase in reuse of certified datasets/metrics

- A 15% reduction in cost‑to‑answer with cache guidance
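Checking a pilot against these targets is a percent‑change comparison between the baseline and the latest snapshot. The Python sketch below assumes hypothetical metric names and made‑up baseline and pilot values, and it treats the lower bound of the 25–40% range as the threshold for time‑to‑first‑insight.

```python
def pct_change(baseline, current):
    """Signed percent change from baseline to current."""
    return (current - baseline) / baseline * 100.0


# (direction, minimum percent change) per metric; the names are hypothetical.
TARGETS = {
    "time_to_first_insight_hours": ("decrease", 25.0),
    "certified_reuse_count": ("increase", 30.0),
    "cost_to_answer": ("decrease", 15.0),
}


def evaluate(baseline, current, targets=TARGETS):
    """Return {metric: (rounded percent change, target met?)}."""
    results = {}
    for name, (direction, threshold) in targets.items():
        change = pct_change(baseline[name], current[name])
        if direction == "decrease":
            met = change <= -threshold
        else:
            met = change >= threshold
        results[name] = (round(change, 1), met)
    return results


# Illustrative numbers only, not measurements from any real pilot.
baseline = {"time_to_first_insight_hours": 40, "certified_reuse_count": 50, "cost_to_answer": 2.0}
pilot = {"time_to_first_insight_hours": 26, "certified_reuse_count": 64, "cost_to_answer": 1.5}

results = evaluate(baseline, pilot)
for metric, (change, met) in results.items():
    print(f"{metric}: {change:+.1f}% ({'met' if met else 'not met'})")
```

With these sample inputs, time‑to‑first‑insight and cost‑to‑answer clear their thresholds while reuse lands just under 30%, the kind of near miss worth calling out at the Week 8 checkpoint rather than rounding up.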

What leadership reporting should we use?

Publish a monthly domain summary: time‑to‑first‑insight, reuse of certified metrics, approval backlog, and cost‑to‑answer. Highlight two decisions made faster thanks to governed assistants.

See how governed assistants reduce time‑to‑insight without sacrificing trust. Request a guided demo at request-demo.

Pair that demo with one domain owner and a data product lead, so you can leave with an eight‑week plan and a shortlist of metrics to track from day one.

As adoption grows, standardize how teams save and tag insights, and promote the best patterns in an internal “answers library.” This keeps speed gains compounding without fragmenting definitions.

Finally, document ownership for each certified dataset and metric so questions have a clear path to resolution and trust remains high as usage scales.

Walter Write

Staff Writer

Tech industry analyst and content strategist specializing in AI, productivity management, and workplace innovation. Passionate about helping organizations leverage technology for better team performance.